Clinical Trials Platform: Discovery, prototyping and pathfinder user testing.

Discovery & Problem Definition

Working closely with the client team, we set about building a shared understanding of the platform's intended users and the problems it was designed to solve. Our process centred on three interconnected workstreams:

User definition — mapping the range of personas likely to interact with the platform, from trial coordinators and data managers to senior operational leads.

Jobs to be Done (JTBD) — conducting structured interviews and workshops to surface the core functional, emotional and social jobs users needed the platform to perform.

Feature mapping — translating identified jobs into discrete product capabilities, establishing a clear line of sight from user need to product feature.

This structured approach produced a prioritised feature set grounded in genuine user need rather than assumption. That foundation de-risks subsequent phases of work and gives the client team confidence in the product's direction of travel.

Prototyping

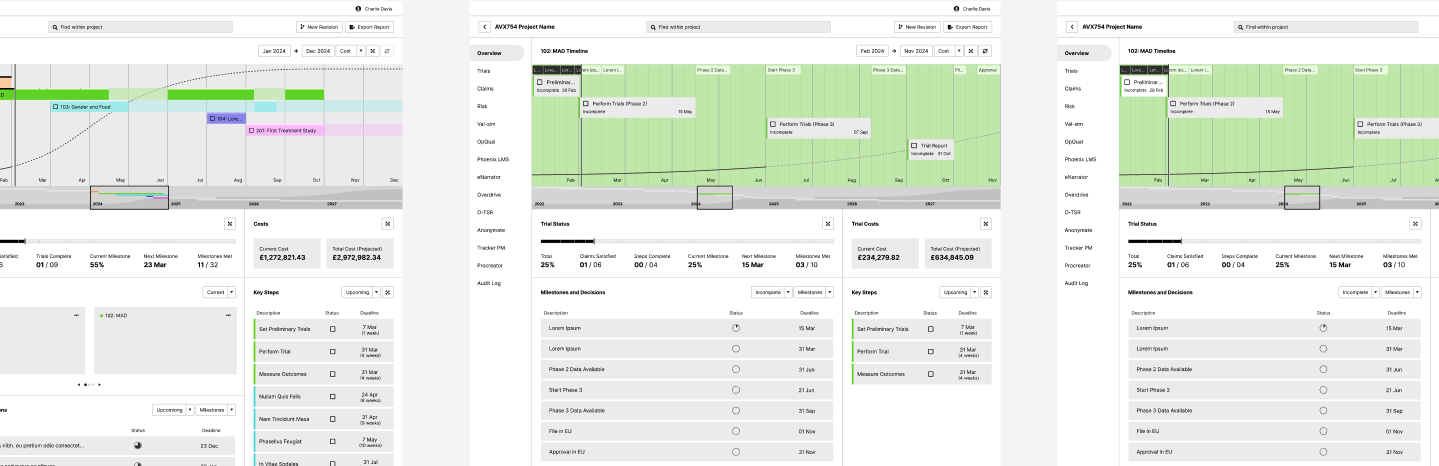

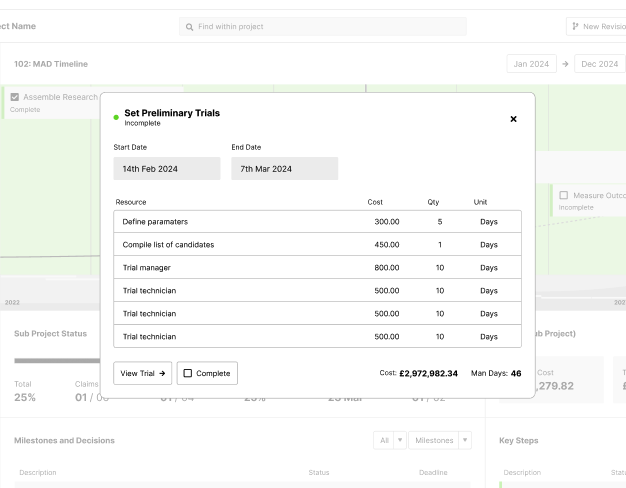

With a clear problem space established, we moved swiftly into prototyping. Using established UI libraries, we built out core screens, experiences and flows without the overhead of creating components from scratch.

Rather than attempting to animate or detail the entire product, we were deliberately selective. Our focus fell on the screens and interactions that best represented the platform's unique value proposition — the moments where it most meaningfully departed from the status quo and offered something genuinely better.

This approach kept prototype production lean and ensured that every artefact we created carried real communicative value. Stakeholders and test participants alike engaged with the parts of the product that mattered most.

Approach Summary: Depth over breadth — fewer screens, done properly, communicate more than a superficial pass across the whole product.

User Testing & Validation

With a working prototype in hand, we recruited a cohort of pathfinder users — individuals closely matching our primary personas — to validate the core design direction. Sessions were structured to assess three things:

Comprehension — did users understand what the platform was, what it offered, and how it worked?

Usability — could users navigate core flows without friction or confusion?

Value — did the prototype credibly demonstrate an improvement on how users currently work?

Feedback was synthesised and fed directly into a more refined version of the prototype, allowing us to address pain points and sharpen the overall experience before progressing to broader feature development.

Iteration & Feature Buildout

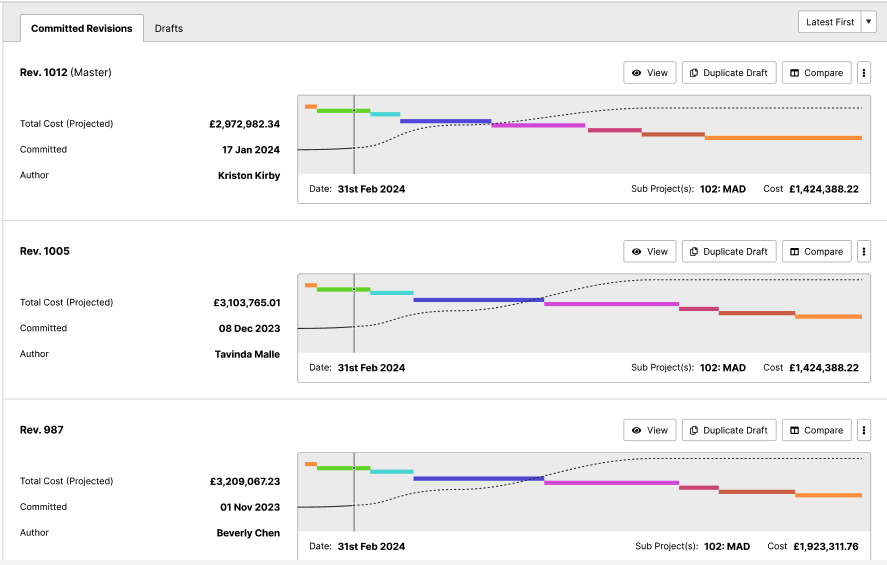

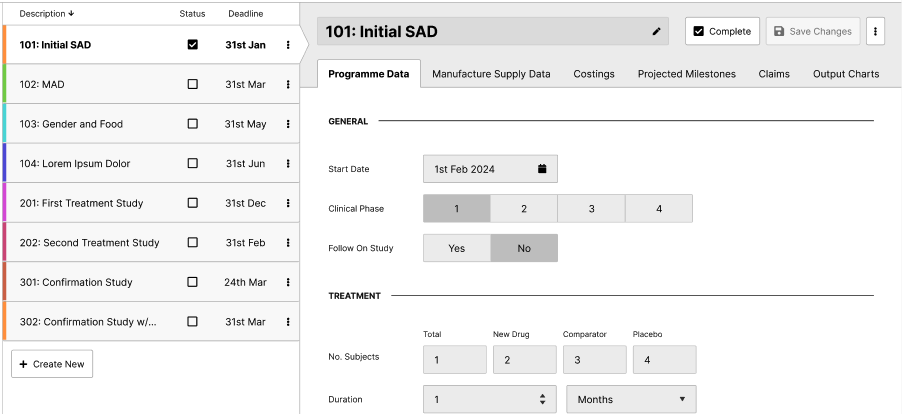

Once a validated design language was established and the core prototype had proven the platform's fundamental proposition, we extended our scope to cover new product modules. Crucially, we did so using the same principles and component foundations we had already developed.

This approach enabled us to move quickly without sacrificing quality. Each new module benefited from the rigour applied in earlier phases, and the cumulative output — a coherent, well-structured design system — gave the client's development team a robust, scalable foundation to build from.

Outcome: A slick, highly usable design system ready to be handed to engineering — covering all core modules and extensible to future functionality.

Building Further Value

The artefacts produced through this engagement have utility well beyond the immediate handover to development. Across our work with clients at similar stages, prototypes and design systems of this quality typically go on to serve several additional purposes:

Investor and board communications — a polished, interactive prototype is a far more compelling demonstration of product vision than a slide deck or written brief.

Commercial pilots and early adopter engagement — the prototype provides a low-risk vehicle for gauging interest and gathering feedback from prospective customers prior to full launch.

Recruitment and team onboarding — a tangible, well-documented design system accelerates the ramp-up of new developers and designers joining the programme.

Regulatory and compliance documentation — structured design artefacts, particularly those rooted in evidenced user needs, can support submissions where demonstration of user-centred design practice is required.

Continued iteration — the design system establishes a scalable framework that evolves with the product, ensuring design quality is maintained as scope grows.

Tools and Methods

The following tools and frameworks underpinned the engagement:

Claude.ai — used throughout discovery and synthesis phases to accelerate analysis, generate structured outputs from qualitative research, and support documentation.

Figma — primary tool for wireflows, prototyping and design system development.

Jobs to be Done (JTBD) framework — structured methodology for user need identification and feature prioritisation.
